This part of the Psychology 101 series of posts is about thought and reasoning – the ways we transform and manipulate mental representations to navigate our way through life. Thoughts shape what we do, and their power should not be underestimated, which is why thinking is such a big area of interest in psychology. This post explores the basic units of thought, such as mental images and concepts, and the way people manipulate these units to reason, solve problems and make decisions. It then examines implicit and everyday thinking, exploring how people solve problems and make judgements outside of awareness, often relying on emotion as well as cognition.

Units of Thought

In many ways, thought is simply an extension of perception and memory. When we perceive, we form a mental representation. When we remember, we try to bring that mental representation to mind. When we think, we use representations to try to solve a problem or answer a question; often when we think we are actually just evaluating and organising our new and existing mental representations. Thinking means manipulating mental representations for any purpose.

Thinking in Words and Images

Most of the time, humans think with either words or images, or a combination of the two. The images that we conjure up in our mind are called mental images, such as images of our ideal holiday destination, or what we usually eat for breakfast.

People also frequently think using mental models, representations that describe, explain or predict the way things work. Mental models can be quite simple, like most people’s understanding of cars (if it doesn’t start, something must be wrong under the hood) or a child’s understanding of a cavity (a hurt tooth that means I have to go to the dentist). On the other hand, mental models can be quite complex, such as the mental models used by a mechanic to troubleshoot a car, or a dentist’s understanding of how cavities are formed. While mental models often include visual elements, they also always include descriptions of the relationships among certain elements.

Concepts and Categories

Before people can think about an object, they usually have to classify it so that they know what it is and what it does. An approaching person is either a friend, stranger or enemy; a guitar is either acoustic or electric; a piece of food on the table is either a fruit or a vegetable, and if it’s a fruit, it is either an apple or an orange, and so on. People will not think about an object until they have placed it in a mental drop-down list of possible categories.

A concept is a mental representation of a category; that is, an internal portrait of a class of objects, ideas or events that share common properties. Some concepts can be visualised, but a concept is broader than its visual image. For example, the concept car stands for a class of transport vehicles with four wheels, seating space for at least one driver and one passenger and a generally predictable shape (schema). Other concepts, like honesty, defy visual representation, although they may have visual associations, such as an image of an honest face, or a person you view as being quite honest.

The process of identifying an object as being a part of a category is called categorisation. Categorisation is essential to thinking, because it allows people to make inferences about objects. For example, if I classify the drink in my glass as an alcoholic beverage, I am more likely to make assumptions about how many I can drink and what I will feel like afterwards.

For years psychologists and philosophers have wrestled with the question of how people categorise objects or situations. How do they decide that a crab is not a spider, for example? One possibility is that people compare the features of objects with a list of defining features – qualities that are essential, or necessarily present, in order to classify the object as a member of the category. For example: spiders are usually a dark brown or black colour, covered in fur, have a big abdomen and can crawl in any direction, while crabs are usually orange or red in colour, have a hairless shell, have a plate-shaped body and can only crawl sideways – and they have pincers! These defining features ensure that we never confuse a crab with a spider. These are well-defined concepts – they have properties clearly setting them apart from other concepts.

Most of the concepts used in daily life, however, are not easily defined. Consider the concept good. This concept takes on different meanings when applied to a meal or a person: very few of us look for tastiness in humans, and sensitivity in a meal. Similarly, the concept adult is hard to define, at least in Western cultures: at what point does a person stop being an adolescent and become an adult? Is it when they reach a certain age, or when they realise a certain quality in their behaviour?

People tend to classify objects rapidly by judging their similarity to concepts stored in memory. This is why a person would almost instantly recognise a parrot or a pigeon as a bird, but might take longer to recognise a penguin as a bird. People classify in this way by referring to a prototype, which is basically an outline of an object against which all other objects in the same category are compared. For example, when people construct a prototype of a bird in their minds, the image is not necessarily of any bird in particular, but rather a rough sketch that contains the typical features of the category (shape, size, colour and so on).
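Judging similarity to a prototype can be sketched in a few lines of code. This is only a toy illustration, not a model from the research literature: the feature names, the scores and the way distance is measured are all assumptions made up for the example.

```python
# Hypothetical features scored from 0 to 1; the values are illustrative only.
BIRD_PROTOTYPE = {"flies": 1.0, "sings": 0.8, "small": 0.8, "has_feathers": 1.0}

def distance_to_prototype(animal, prototype=BIRD_PROTOTYPE):
    """Sum of absolute feature differences: smaller means more typical."""
    return sum(abs(animal.get(feature, 0.0) - value)
               for feature, value in prototype.items())

# Assumed feature scores for two birds.
pigeon = {"flies": 1.0, "sings": 0.5, "small": 0.7, "has_feathers": 1.0}
penguin = {"flies": 0.0, "sings": 0.0, "small": 0.3, "has_feathers": 1.0}

print(distance_to_prototype(pigeon))   # small distance: a typical bird
print(distance_to_prototype(penguin))  # larger distance: an atypical bird
```

With these made-up numbers the pigeon sits much closer to the prototype than the penguin, which mirrors the idea that typical members of a category are recognised more quickly.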

Hierarchies of Concepts

Many concepts are hierarchically ordered, with further sub categories branching off from the main ones. Efficient thinking requires choosing the right level of category on the hierarchy. A woman walking down the street in a bright yellow raincoat belongs to the category mammal, and human, just as clearly as she belongs to the sub category woman. We are more likely to say ‘Look at that woman in the yellow raincoat’ than ‘Look at that mammal in a brightly coloured artificial skin’.

The level people naturally tend to use in categorising objects is known as the basic level: the broadest, most inclusive level at which objects share common attributes that are distinctive of the concept (for example: woman, car, cat, dog, house). The basic level is the level at which people categorise most quickly, which is why it is natural to gravitate towards using it.

At times, however, people categorise at the subordinate level, the level of categorisation below the basic level in which more specific attributes are shared by members of a category. This is the level where people distinguish between objects that fall under the basic category, for example a bird watcher will distinguish between a blue bird and a hummingbird.

People also sometimes classify objects at a superordinate level, which is an abstract level in which members of a category share few common features. A farmer, for example, may ask, ‘are the animals in the barn?’ rather than list all of the animals that should be in the barn. The superordinate level is one level more abstract than the basic level, and members of this class share fewer specific features.

The hierarchy can be more easily explained by looking at the above diagram, where it is visually apparent how the system works. In this example, mammal is classified as superordinate and dog is grouped at the basic level, while golden retriever, Dalmatian and Siberian husky (all different breeds of dog) sit at the subordinate level.
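For readers who like to see structure written down, the same hierarchy can be sketched as a simple lookup table. This is just one possible way to represent it; the `PARENT` table and the `ancestors` function are names invented for this example.

```python
# Each concept points to its parent, which is one level more abstract.
PARENT = {
    "golden retriever": "dog",
    "Dalmatian": "dog",
    "Siberian husky": "dog",
    "dog": "mammal",
}

def ancestors(concept):
    """Walk up the hierarchy from a concept to the most abstract level."""
    chain = []
    while concept in PARENT:
        concept = PARENT[concept]
        chain.append(concept)
    return chain

print(ancestors("Dalmatian"))  # ['dog', 'mammal']
```

Reading the result from left to right moves from the basic level (dog) up to the superordinate level (mammal).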

Reasoning

Reasoning refers to the process by which people generate and evaluate arguments and beliefs. Philosophers have long distinguished between two kinds of reasoning: inductive and deductive. We examine each separately here, and will then explore one of the most powerful mechanisms people use to make inferences, particularly about novel situations: reasoning by analogy.

Inductive reasoning is reasoning from specific observations to more general propositions. An inductive conclusion is not necessarily true because its underlying premises are only probable, not certain. For example, say you asked a friend who appeared to be quite upset if she was feeling OK, and she replied ‘yes, I’m fine’. Inductive reasoning could lead you to the conclusion ‘if my friend says she is fine, then she must be fine’, which may well be wrong. It is simply reasoning from observation. Another example would be a child who believes that ‘if Santa can climb down the chimney, so can the bogeyman!’ Nevertheless, inductive reasoning forms a large chunk of our day-to-day reasoning. If someone raises their voice, we reason that they must be angry; if someone looks sad, we reason that something must be wrong; if everything appears the same as it did yesterday, we reason that everything is the same as yesterday.

Deductive reasoning is logical reasoning that draws a conclusion from a set of assumptions, or premises. In contrast to inductive reasoning, it starts off with an idea rather than an observation. In some ways, deduction is the flipside of induction: whereas induction starts with specifics and draws general conclusions, deduction starts with general principles and makes inferences about specific instances. For example, if you understand the general premise that all dogs have fur and you know that your next door neighbour just bought a dog, then you can deduce that your neighbour’s dog has fur, even though you haven’t seen it yet.

This kind of deductive argument is referred to as a syllogism. A syllogism consists of two premises that lead to a logical conclusion. If it is true that:

A) All dogs have fur and

B) The neighbour’s new pet is a dog.

Then there is no choice but to accept the conclusion that:

C) The neighbour’s new dog has fur.

Unlike inductive reasoning, deductive reasoning can lead to certain rather than simply probable conclusions, as long as the premises are correct and the reasoning is logical.
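The dog syllogism above can be written as a tiny sketch in code, where the conclusion follows mechanically from the two premises. The names `RULES`, `neighbours_pet` and `deduce` are made up for this illustration.

```python
# Premise A: all dogs have fur (a general rule about a category).
RULES = {"dog": {"has fur"}}

# Premise B: the neighbour's new pet is a dog (a specific instance).
neighbours_pet = {"kind": "dog"}

def deduce(individual, rules):
    """Return the properties that follow from the individual's category."""
    return rules.get(individual["kind"], set())

# Conclusion C: the neighbour's new dog has fur.
print(deduce(neighbours_pet, RULES))  # {'has fur'}
```

The conclusion is only as good as the premises: if the rule in `RULES` were false, the code would still happily return it.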

Deductive reasoning seems as though it would follow similar principles everywhere. However, recent research suggests that Eastern and Western cultures may follow somewhat different rules of logic – or at least have different levels of tolerance for certain kinds of inconsistency. The tradition of logic in the West, extending from Ancient Greece to the present, places an enormous emphasis on the law of non-contradiction: two statements that contradict each other cannot both be true. This rule is central to solving syllogisms. In the East, by contrast, people view contradictions with much more acceptance, and often believe them to contain great wisdom. Take, for example, Zen koans (statements or questions) that offer no rational solution and are often paradoxical in nature, in the manner of the familiar riddle: ‘If a tree falls in the forest, and no one is around to hear it, does the tree make a sound?’ Western thought aims to resolve contradiction by using logic, while people in the East focus instead on the truth that each statement provides – relishing, rather than resolving, paradox.

A humorous example of this difference can be found in an episode of South Park, where Stan and Kyle are trying to locate and destroy ‘the heart of Wal-Mart’ so it will lose its power over South Park’s inhabitants. In this exchange of dialogue, Stan and Kyle represent Western rational thought, while the embodiment of Wal-Mart represents Eastern paradoxical thought:

STAN: We don’t want your store in our town; we’ve come to destroy you!

KYLE: Where’s the heart?

WAL-MART: To find the heart of Wal-Mart, one must first ask oneself, “Who is it that asked the question?”

STAN: Me. I’m asking the question.

WAL-MART: Ah, yes, but who are you?

STAN: Stan Marsh. Now where is the heart?

WAL-MART: Ah, you know the answer, but not the question!

KYLE: The question is: ‘Where is the heart?’

In this exchange of dialogue you can see that Stan and Kyle, the Western rational thinkers, have a low tolerance for all of Wal-Mart’s philosophical questions and are answering them with straightforward logic, even though these are not the answers that Wal-Mart (Eastern thought) seeks. To Eastern thought, the question is generally more important than the answer, just as the journey is viewed as being more important than the destination.

Reasoning by Analogy

Analogy is one of the most powerful reasoning devices we have, and we use it a lot in everyday conversation to explain new situations. Analogical reasoning is the process by which people understand a novel situation in terms of a familiar one. For example, someone who has never done heroin might ask someone who has what the sensation is like. Since the person asking has never tried it, the heroin user would have to use an analogy to create a comparison. He might say it is like sinking into a hot bath on a freezing cold day. Since the person who asked the question can relate to the analogy (let’s assume they both live in a very cold country), through analogical reasoning he can infer what it must feel like to be on heroin. Similarly, someone with schizophrenia could use analogical reasoning to suggest that having schizophrenia is like living in a nightmare and not being able to wake up.

A key aspect of analogies of this sort is that the familiar situation and the novel situation must each contain a system of elements that can be mapped onto one another. For an analogy to take hold, the two situations need not literally resemble each other. However, the elements of the two situations must relate to one another in a way that explains how the elements of the novel situation are similar to those of the familiar situation.

Problem Solving

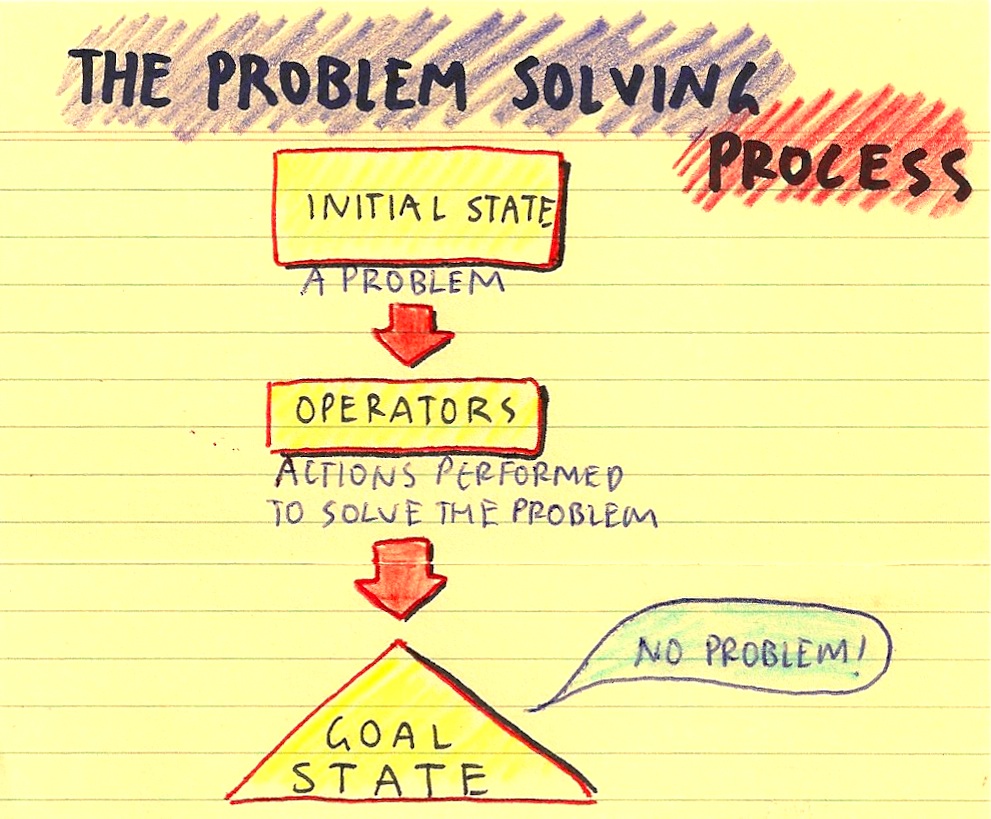

Problem solving refers to the process of transforming one situation into another to meet a goal. The aim is to move from a current, unsatisfactory state (the initial state) to a state in which the problem is resolved (the goal state). To get from the initial state to the goal state, the person uses operators, mental and behavioural processes aimed at transforming the initial state until it eventually approximates the goal.

In well-defined problems, the initial state, goal state and operators are easily determined. Maths problems are examples of well-defined problems. However, few problems are so straightforward in life; ill-defined problems occur when both the information needed to solve them and the criteria for determining when the goal has been met are vague.

Solving a problem, once it has been clarified, can be viewed as a four-step process.

- Step one is to compare the initial state with the goal state to identify precise differences between the two.

- Step two is to identify possible operators and select one that seems most likely to reduce the differences.

- Step three is to apply the operator/s, responding to challenges and roadblocks, by establishing subgoals – minigoals on the way to achieving the broader goal.

- The fourth and final step is to continue using operators until all differences between the initial state and the goal state are eliminated.
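The four steps above can be sketched as a short loop. This is a simplified illustration rather than a full account of human problem solving: states are treated as sets of conditions, each operator simply adds conditions, subgoals are not modelled, and the dinner party example and all names are invented for the sketch.

```python
def solve(initial, goal, operators):
    """Apply operators until no differences remain between state and goal."""
    state, plan = set(initial), []
    while True:
        differences = goal - state            # step 1: compare state with goal
        if not differences:                   # step 4: stop when none remain
            return plan
        # Step 2: pick the operator that removes the most differences.
        name, adds = max(operators.items(),
                         key=lambda op: len(op[1] & differences))
        if not adds & differences:
            return None                       # no operator helps, so give up
        state |= adds                         # step 3: apply the operator
        plan.append(name)

# Hypothetical example: getting ready for a dinner party.
operators = {
    "shop": {"have ingredients"},
    "cook": {"food ready"},
    "tidy": {"house clean"},
}
goal = {"have ingredients", "food ready", "house clean"}
plan = solve(set(), goal, operators)
print(plan)
```

Each pass through the loop shrinks the gap between the current state and the goal state, which is the core idea behind the four steps.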

Problem solving would be impossible if people had to try every potential operator in every situation until they found one that worked. Instead, they employ problem-solving strategies, techniques that serve as guides for solving a problem. For example, algorithms are systematic procedures that inevitably produce a solution to a problem. Computers use algorithms in memory searches, as well as when a spell-check command compares every word in a file against an internal dictionary. Humans also use algorithms to solve some problems, such as counting the number of guests coming to a barbeque and multiplying by two to determine how many sausages to buy.
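Both everyday algorithms mentioned above are simple enough to write out. The sketch below is only illustrative: the function names, the tiny dictionary and the guest count are made up, and a real spell-checker is considerably more sophisticated.

```python
def sausages_needed(guests, per_guest=2):
    """Count the guests and multiply by two."""
    return guests * per_guest

def misspelled(words, dictionary):
    """Compare every word against the dictionary, one by one."""
    return [word for word in words if word.lower() not in dictionary]

print(sausages_needed(12))  # 24

dictionary = {"the", "barbecue", "sausages"}
print(misspelled(["The", "barbecue", "sausges"], dictionary))  # ['sausges']
```

In both cases the procedure is guaranteed to produce an answer, as long as it is followed step by step.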

One of the most important problem-solving strategies is mental simulation – imagining the steps involved in solving a problem mentally before actually undertaking them. Mental simulation is very useful for gauging the possible consequences of your actions and it can help you plan how to attack a problem. Also, visualising the steps towards solving a problem is one step closer to actually carrying these steps out.

A common problem with human problem-solving is functional fixedness, which is the tendency for people to ignore other possible functions of an object when they have a fixed function in mind. In a classic experiment, known in cognitive psychology circles as the ‘candle problem’, participants were asked to mount a candle to a wall so that, when lit, no wax would drip on the floor. On a table lay a few small candles, some tacks and a box of matches. The solution is to empty the box, tack it to the wall and use it as a platform for the candle. The tendency, of course, was to see a matchbox as only a matchbox. If the matches were out of the box, however, participants solved the problem more easily.

This is very similar to another obstacle to problem solving known as mental set, the tendency to keep using the same problem-solving techniques that have worked in the past, even when better alternatives are obvious.

Another common error in problem-solving is confirmation bias, which is the tendency for people to seek only information or solutions that confirm their pre-existing ideas. This bias limits their problem-solving abilities because they will refuse to accept anything that doesn’t conform to what they believe. For example, a religious fanatic will deny any solutions to a problem that contradict their religious beliefs, and will only seek solutions that confirm these beliefs.

Decision Making

Just as life is a series of problems to solve, it is also a series of decisions to make, from the simple ‘do I feel like cereal or toast for breakfast today?’ to the more complex ‘what career is right for me?’ Decision making is the cognitive process by which a person selects a single choice or course of action from among several alternatives, usually by weighing the negative and positive attributes of each possible choice. Here are some decision-making techniques that people typically use:

- Pros and cons – listing the negative and positive outcomes of each option, popularised by Plato.

- Simple prioritisation – choosing the alternative with the highest probability-weighted value.

- Satisficing – using the first acceptable option found.

- Following orders.

- Flipism – leaving the decision to chance, usually by flipping a coin.

- Prayer, tarot cards or any other form of divination.

- Doing the opposite.

[youtube=http://www.youtube.com/watch?v=cKUvKE3bQlY]

In the above clip from Seinfeld, George Costanza, realising that his life is the exact opposite of what he had hoped to achieve, decides to do the opposite of everything he usually does, with striking results!

Explicit and Implicit Thinking

Explicit cognition, which involves the conscious manipulation of representations, is the form of thinking covered so far, but it is only one form. More often than not, people rely on cognitive shortcuts known as heuristics, which allow them to make rapid but sometimes irrational judgements. One example is the representativeness heuristic, in which people categorise by matching the similarity of an object or incident to a prototype but ignore information about its probability of occurring. For example, a mother might use a representativeness heuristic to make a snap judgement about a heavy metal concert based on a violent outbreak she read about in the paper. She won’t allow her son to go to the concert because she believes he will get hurt. She has made this decision rapidly by using a heuristic, but has failed to consider how likely it actually is that violence will occur at the concert and that her son will get hurt.

Another heuristic that is commonly used is the availability heuristic, in which people infer the frequency of an event based on how easily an example can be brought to mind. That is, people essentially assume that events they can recall are typical. Growing up in a media-centred society, where the news is very selective about the sort of stories it covers, fuels our tendency to use the availability heuristic. For example, an often-repeated statistic states that ‘falling coconuts kill 150 people worldwide each year, 15 times the number of fatalities attributable to sharks’. Despite this, people are more likely to think of sharks as being more dangerous than coconuts. If you were to take a lonely stroll along a beach under some coconut trees, no one would think twice about warning you, but if you were to go surfing alone, chances are that you, or someone else, would be thinking about sharks.

We assume that shark attacks are more common than coconut attacks because we hear about them more. If one person gets killed, or even attacked, by a shark, everyone will hear about it on the news, while if someone gets injured or killed by a falling coconut, no one hears about it. Movies are also never made about falling coconuts, while films like Jaws and Deep Blue Sea exist to play on our fears of giant man-eating sharks. This is an example of the availability heuristic: we think that shark attacks are more frequent than they actually are because of how easily we can bring them to mind.

Because of our tendency to make decisions without examining all of the information and facts available, researchers have suggested that human thought is highly susceptible to error. Underlying this view is the notion of bounded rationality: that people are rational within the bounds imposed by their environment, goals and abilities. Thus, instead of making optimal judgements, people typically make good-enough judgements. Herbert Simon (1956) called this satisficing, a combination of satisfying and sufficing. When we choose a place to have dinner, for example, we don’t go through every restaurant in the phone book, weigh up the nutritional value of certain foods and glance through hundreds of menus. Instead we go through a list of restaurants that come to mind and choose the one that seems the most satisfying at the moment. Often this boils down to whether we feel like McDonald’s or KFC.
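The difference between satisficing and optimising is easy to show with a small sketch. The restaurant names, the scores and the ‘good enough’ threshold below are all invented for the example.

```python
def satisfice(options, good_enough):
    """Return the first option whose score meets the threshold."""
    for name, score in options:
        if score >= good_enough:
            return name
    return None

def optimise(options):
    """Examine every option and return the best one."""
    return max(options, key=lambda option: option[1])[0]

# Hypothetical restaurants in the order they come to mind, scored out of 10.
restaurants = [("burger place", 6), ("noodle bar", 8), ("fine dining", 9)]

print(satisfice(restaurants, good_enough=7))  # noodle bar
print(optimise(restaurants))                  # fine dining
```

The satisficer stops at the first acceptable option and never even looks at the last one, which saves effort at the cost of sometimes missing the best choice.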

The classic model of rationality emphasises conscious reflection. Yet many of the judgements and inferences people make occur outside of awareness; that is, they simply appear in our minds without our actively thinking about them. This is known as implicit cognition, or cognition outside our awareness. Most learning occurs outside awareness: for example, how long to hold eye contact with a friend compared with a stranger, how long to hug somebody or how firmly to shake someone’s hand. None of these things are taught to us explicitly, but we learn them anyway, implicitly, through direct and indirect observation. We learn these behaviours without ever really consciously thinking about them.

Implicit problem solving can also occur, as when an answer ‘hits you’ days after you have given up trying to solve a problem. Implicit problem solving of this sort occurs through the activity of associational networks, as information associated with unresolved problems remains active outside awareness. Information related to these unsolved problems appears to remain active for extended periods. Over time, other thoughts or environmental cues that occur throughout the day are likely to spread further activation to parts of the network. If enough activation reaches a potential solution, it will force the answer free and catapult it into consciousness. This might explain what happens when people wake up from a dream with the answer to a problem, since elements of the dream can also spread activation to networks involving the unsolved problem. So if you have a problem that you just can’t solve, just sleep on it!

Emotion, Motivation and Decision Making

A common area of discussion amongst psychologists and philosophers is the way reason can be derailed by emotion. Numerous studies have pointed to ways that emotional processes can produce illogical responses. For example, people are more likely to be upset if their lottery ticket misses by one number than if it misses all of the numbers, because they feel as though they only just missed out on winning. In reality, missing one number has exactly the same consequence as missing all the numbers: you don’t win, you lose.

Many of the decisions people make in everyday life stem from their emotional reactions and their expected emotional reactions. This is often apparent in the way people assess risks. Judging risk is highly subjective, which leads to some intriguing questions about precisely what constitutes ‘rational’ behaviour. For example, in gambling situations, losses tend to influence people’s behaviour more than gains, even when paying equal attention to the two would yield the highest average pay-off. Consider the following scenario. A person is offered the opportunity to bet on a coin flip. If the coin comes up heads, he wins $100; if tails, he loses $80. From the standpoint of expected utility theory, the person should take the bet, because on average each flip would yield a gain of $10 (half of $100 minus half of $80). However, common sense suggests otherwise. In fact, for most people, the prospect of losing $80 is more negative than winning $100 is positive. Any given loss of x dollars has greater emotional impact than the equivalent gain.
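The arithmetic behind the coin flip can be checked in a couple of lines. The sketch assumes a fair coin, so each outcome has a probability of 0.5; the function name is made up for the example.

```python
def expected_value(outcomes):
    """Average pay-off: each amount weighted by its probability."""
    return sum(probability * amount for probability, amount in outcomes)

# Heads wins $100, tails loses $80, each with probability 0.5.
bet = [(0.5, 100), (0.5, -80)]
print(expected_value(bet))  # 10.0
```

On paper, then, the bet is worth an average of $10 per flip to whoever accepts it.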

This asymmetry is described by prospect theory, which suggests that the value of future gains and losses to most people is asymmetrical: losses have a greater emotional impact than gains. Given the ambiguity of risk, it is not surprising that motivational and emotional factors play an important role in how people assess it. Although prospect theory and other approaches to risk assessment describe the average person, people actually differ substantially in their willingness to take risks and in how much they enjoy doing so; some people are motivated by fear, while others are motivated by excitement or pleasure. These differences appear to reflect differences in whether a person’s nervous system is more responsive to norepinephrine (which regulates fear responses) or dopamine (which regulates pleasure).
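To see how unequal weighting of losses can turn a favourable bet into an unattractive one, here is a deliberately simplified sketch. It assumes that a loss simply feels twice as bad as an equal gain feels good; that factor of 2 is an assumption chosen for illustration, and the real theory uses a more elaborate value function.

```python
LOSS_AVERSION = 2.0  # assumed: a loss weighs twice as much as an equal gain

def felt_value(amount, loss_aversion=LOSS_AVERSION):
    """Subjective value of an amount: losses are magnified, gains are not."""
    return amount if amount >= 0 else loss_aversion * amount

def felt_value_of_bet(outcomes):
    """Probability-weighted sum of the subjective values."""
    return sum(probability * felt_value(amount)
               for probability, amount in outcomes)

# The same coin flip: win $100 on heads, lose $80 on tails.
bet = [(0.5, 100), (0.5, -80)]
print(felt_value_of_bet(bet))  # -30.0
```

Under this assumption the bet feels like a losing proposition even though, in plain dollar terms, it pays off on average.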

Connectionism

Connectious says…psychology is in the midst of a ‘second cognitive revolution’. This revolution has challenged the notion that the mind is a conscious, step-at-a-time information processor that functions like a computer. One of the major contributors to this revolution is an approach to perception, learning, memory, thought and language called connectionism, or parallel distributed processing (PDP), which asserts that most cognitive processes occur simultaneously through the action of multiple activated networks. Firstly, PDP models emphasise parallel rather than serial processing. Human processing is simply too fast, and the requirements of the environment too demanding, for serial or one-by-one processing to be our primary mode of information processing.

Secondly, according to PDP models, the meaning of a representation is not contained in some specific warehouse in the brain. Rather, it is spread out, or distributed, throughout an entire network of processing units (nodes in the network) that have become activated together through experience. Each node attends to some small aspect of the representation to create a whole concept. For instance, when a person comes across a barking dog, her visual system will simultaneously activate networks of neurons that have previously been activated by animals with two ears, four legs and a tail. At the same time, auditory circuits previously turned on by barking will become active. The simultaneous activation of all these neural circuits identifies the animal with high probability as a dog. The tendency to settle on a cognitive solution that satisfies as many constraints as possible to best fit the data is called constraint satisfaction. With the above example, a four-legged creature with a tail could be a dog or a cat, but if it starts barking, the barking will further activate the dog concept and inhibit the cat concept, because the neurons representing barking spread activation to networks associated with dogs and spread inhibition to networks associated with cats.
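The dog and cat example can be mimicked with a toy network. This is a rough sketch only: the connection weights are made up, and the code adds up the cues one after another for simplicity, whereas PDP models assume the activation spreads in parallel.

```python
# Hypothetical connection weights: positive values spread activation,
# negative values spread inhibition.
WEIGHTS = {
    "four legs": {"dog": 1.0, "cat": 1.0},
    "tail":      {"dog": 1.0, "cat": 1.0},
    "barking":   {"dog": 2.0, "cat": -2.0},
}

def activation(cues):
    """Total activation each concept receives from the observed cues."""
    totals = {"dog": 0.0, "cat": 0.0}
    for cue in cues:
        for concept, weight in WEIGHTS[cue].items():
            totals[concept] += weight
    return totals

print(activation(["four legs", "tail"]))             # {'dog': 2.0, 'cat': 2.0}
print(activation(["four legs", "tail", "barking"]))  # {'dog': 4.0, 'cat': 0.0}
```

Without the barking, the two concepts are tied; once the barking is added, the dog concept wins and the cat concept is suppressed.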

That’s all for this post on thinking and reasoning. I hope it got you thinking!

Check Out Part 4 of Psychology 101: Language!

Other guides in the Psychology 101 series: